Resources

In-depth information about edge AI and vision applications, technologies, products, markets and trends.

The content in this section of the website comes from Edge AI and Vision Alliance members and other industry luminaries.

All Resources

Top Python Libraries of 2025

This article was originally published at Tryolabs’ website. It is reprinted here with the permission of Tryolabs. Welcome to the 11th edition of our yearly roundup of the Python libraries! If 2025 felt like the year of

Why Camera Selection is Extremely Critical in Lottery Redemption Terminals

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. Lottery redemption terminals represent the frontline of trust between lottery operators and millions of players.

Quadric, Inference Engine for On-Device AI Chips, Raises $30M Series C as Design Wins Accelerate Across Edge

Tripling product revenues, comprehensive developer tools, and scalable inference IP for vision and LLM workloads position Quadric as the platform for on-device AI. BURLINGAME, Calif., Jan. 14, 2026 (PRNewswire) — Quadric®, the inference engine that

How to Enhance 3D Gaussian Reconstruction Quality for Simulation

This article was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Building truly photorealistic 3D environments for simulation is challenging. Even with advanced neural reconstruction methods such as 3D

Quadric’s SDK Selected by TIER IV for AI Processing Evaluation and Optimization, Supporting Autoware Deployment in Next-Generation Autonomous Vehicles

Quadric today announced that TIER IV, Inc., of Japan has signed a license to use the Chimera AI processor SDK to evaluate and optimize future iterations of Autoware, open-source software for autonomous driving pioneered by

Vision Components to Present VC MIPI IMX454 Multispectral Camera Module at Photonics West

Ettlingen, Germany, January 12, 2026 — Vision Components will present its new VC MIPI IMX454 Camera Module at SPIE Photonics West, 20-22 January in San Francisco, California. The MIPI Camera features Sony’s new multispectral image

AI Glasses: Ushering in the Next Generation of Advanced Wearable Technology

This blog post was originally published at NXP Semiconductors’ website. It is reprinted here with the permission of NXP Semiconductors. With the continuous evolution of AI and smart hardware, AI smart glasses are no longer

Deep Learning Vision Systems for Industrial Image Processing

This blog post was originally published at Basler’s website. It is reprinted here with the permission of Basler. Deep learning vision systems are often already a central component of industrial image processing. They enable precise error

Free Webinar Examines Autonomous Imaging for Environmental Cleanup

On March 3, 2026, at 9 am PT (noon ET), The Ocean Cleanup’s Robin de Vries, ADIS (Autonomous Debris Imaging System) Lead, will present the free one-hour webinar “Cleaning the Oceans with Edge AI: The

Technologies

Sony Pregius IMX264 vs. IMX568: A Detailed Sensor Comparison Guide

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. The image sensor is an important component in defining the camera’s image quality. Many real-world applications pushed for smaller pixel sizes to increase resolution in compact form factors. To address this demand, Sony has been improving

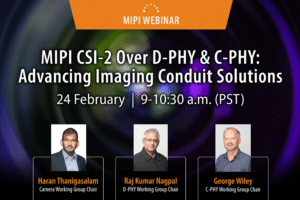

Upcoming Webinar on CSI-2 over D-PHY & C-PHY

On February 24, 2026, at 9:00 am PST (12:00 pm EST), MIPI Alliance will deliver a webinar, “MIPI CSI-2 over D-PHY & C-PHY: Advancing Imaging Conduit Solutions.” From the event page: MIPI CSI-2®, together with MIPI D-PHY™ and C-PHY™ physical layers, form the foundation of image sensor solutions across a wide range of markets, including

Applications

What Happens When the Inspection AI Fails: Learning from Production Line Mistakes

This blog post was originally published at Lincode’s website. It is reprinted here with the permission of Lincode. Studies show that about 34% of manufacturing defects are missed because inspection systems make mistakes.[1] These numbers show a big problem—when the inspection AI misses something, even a tiny defect can spread across hundreds or thousands of products. One

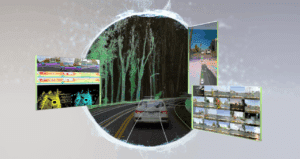

Accelerating next-generation automotive designs with the TDA5 Virtualizer™ Development Kit

This blog post was originally published at Texas Instruments’ website. It is reprinted here with the permission of Texas Instruments. Introduction: Continuous innovation in high-performance, power-efficient systems-on-a-chip (SoCs) is enabling safer, smarter and more autonomous driving experiences in even more vehicles. As another big step forward, Texas Instruments and Synopsys developed a Virtualizer Development Kit™ (VDK) for the

Into the Omniverse: OpenUSD and NVIDIA Halos Accelerate Safety for Robotaxis, Physical AI Systems

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. NVIDIA Editor’s note: This post is part of Into the Omniverse, a series focused on how developers, 3D practitioners and enterprises can transform their workflows using the latest advancements in OpenUSD and NVIDIA Omniverse. New NVIDIA safety

Functions

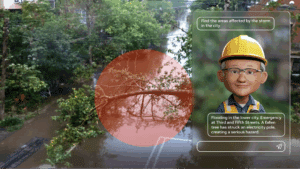

AI On: 3 Ways to Bring Agentic AI to Computer Vision Applications

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Learn how to integrate vision language models into video analytics applications, from AI-powered search to fully automated video analysis. Today’s computer vision systems excel at identifying what happens in physical spaces and processes, but lack the abilities to explain the

SAM3: A New Era for Open‑Vocabulary Segmentation and Edge AI

Quality training data – especially segmented visual data – is a cornerstone of building robust vision models. Meta’s recently announced Segment Anything Model 3 (SAM3) arrives as a potential game-changer in this domain. SAM3 is a unified model that can detect, segment, and even track objects in images and videos using both text and visual

TLens vs VCM Autofocus Technology

This blog post was originally published at e-con Systems’ website. It is reprinted here with the permission of e-con Systems. In this blog, we’ll walk you through how TLens technology differs from traditional VCM autofocus, how TLens combined with e-con Systems’ Tinte ISP enhances camera performance, key advantages of TLens over mechanical autofocus systems, and applications